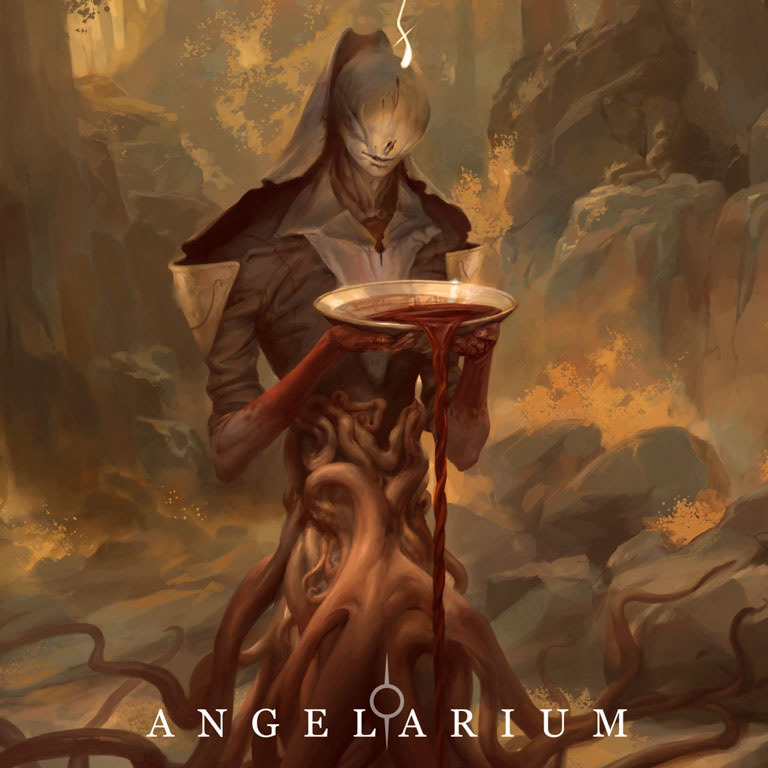

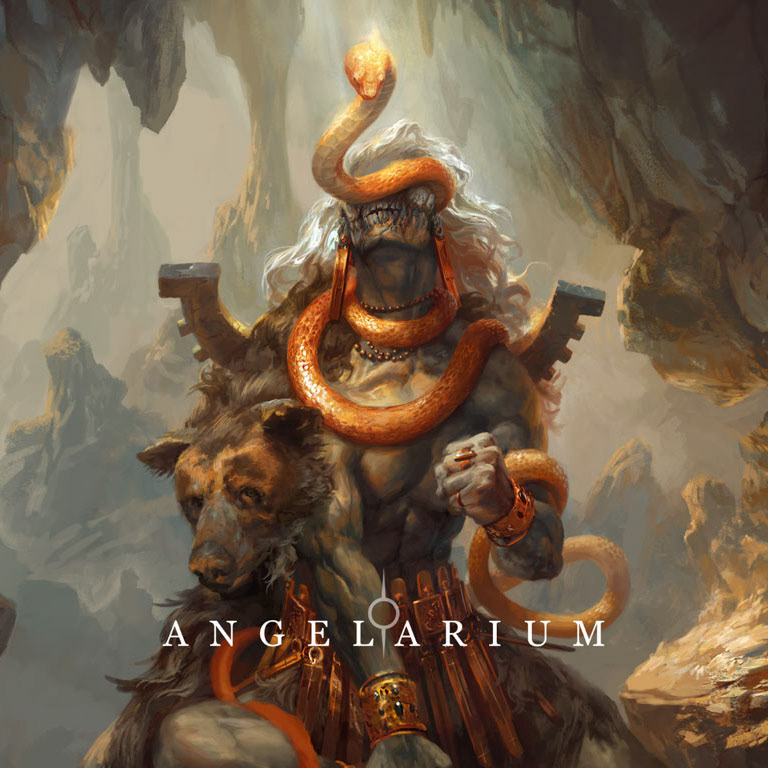

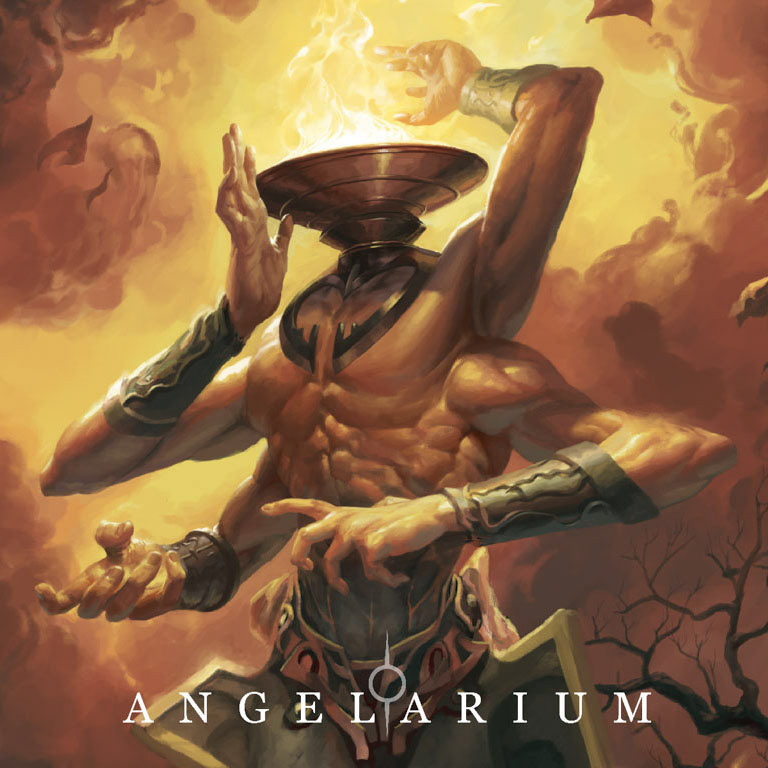

the images above were the first batch generated using a custom stablediffusion model, which I trained on a database of beautiful, ethereal, hand-drawn illustrations of seraphim by the talented Mr. Peter Mohrbacher. All credit to the original artist, if you like what you see please support his work by visiting:

www.angelarium.net

so, the problem is that computer vision models love seeing people and clouds, because the datasets they're trained on are full of them. presumably, it "thinks" something like:

foreground might be a person? --> definitely a person.

top half of a photo might be clouds? --> definitely clouds.

foreground might be a person? --> definitely a person.

top half of a photo might be clouds? --> definitely clouds.

when it comes to drawing them, though:

feathery-winged thing in front of clouds --> not a bird?

person-shaped thing holding weapon, surrounded by swirly stuff --> not a monster?

feathery-winged thing in front of clouds --> not a bird?

person-shaped thing holding weapon, surrounded by swirly stuff --> not a monster?

Some examples from early on in training illustrate the problem:

to help teach it, i used a colab implementation of kohya-ss' training script.

first, 108 images were cropped to 768x768 and tagged with booru anime tagger.

some examples of what it saw in the images above:

"1boy, bird, wings, horns, weapon, sky, sword, cloud,

tattoo, muscular, moon, feathered wings, scarf,

...extra arms, monster boy, tentacles, eldritch abomination"

first, 108 images were cropped to 768x768 and tagged with booru anime tagger.

some examples of what it saw in the images above:

"1boy, bird, wings, horns, weapon, sky, sword, cloud,

tattoo, muscular, moon, feathered wings, scarf,

...extra arms, monster boy, tentacles, eldritch abomination"

The training details:

LoRa trained on SD1.5, 32 dim 16 alpha

108 images x 4 repeats x 16 epochs / 2 batch size = 3,456 steps

unet learning rate 2e-4, text encoder 1e-4

trained for an hour or two (courtesy of google) on an A100

with lots of cores and teraflops and stuff.

LoRa trained on SD1.5, 32 dim 16 alpha

108 images x 4 repeats x 16 epochs / 2 batch size = 3,456 steps

unet learning rate 2e-4, text encoder 1e-4

trained for an hour or two (courtesy of google) on an A100

with lots of cores and teraflops and stuff.

and we're making some progress. It's got the clouds down pretty well! And abstract shapes! :)